Despite Musk’s claims, vehicles have crashed because they did not properly evaluate their environment

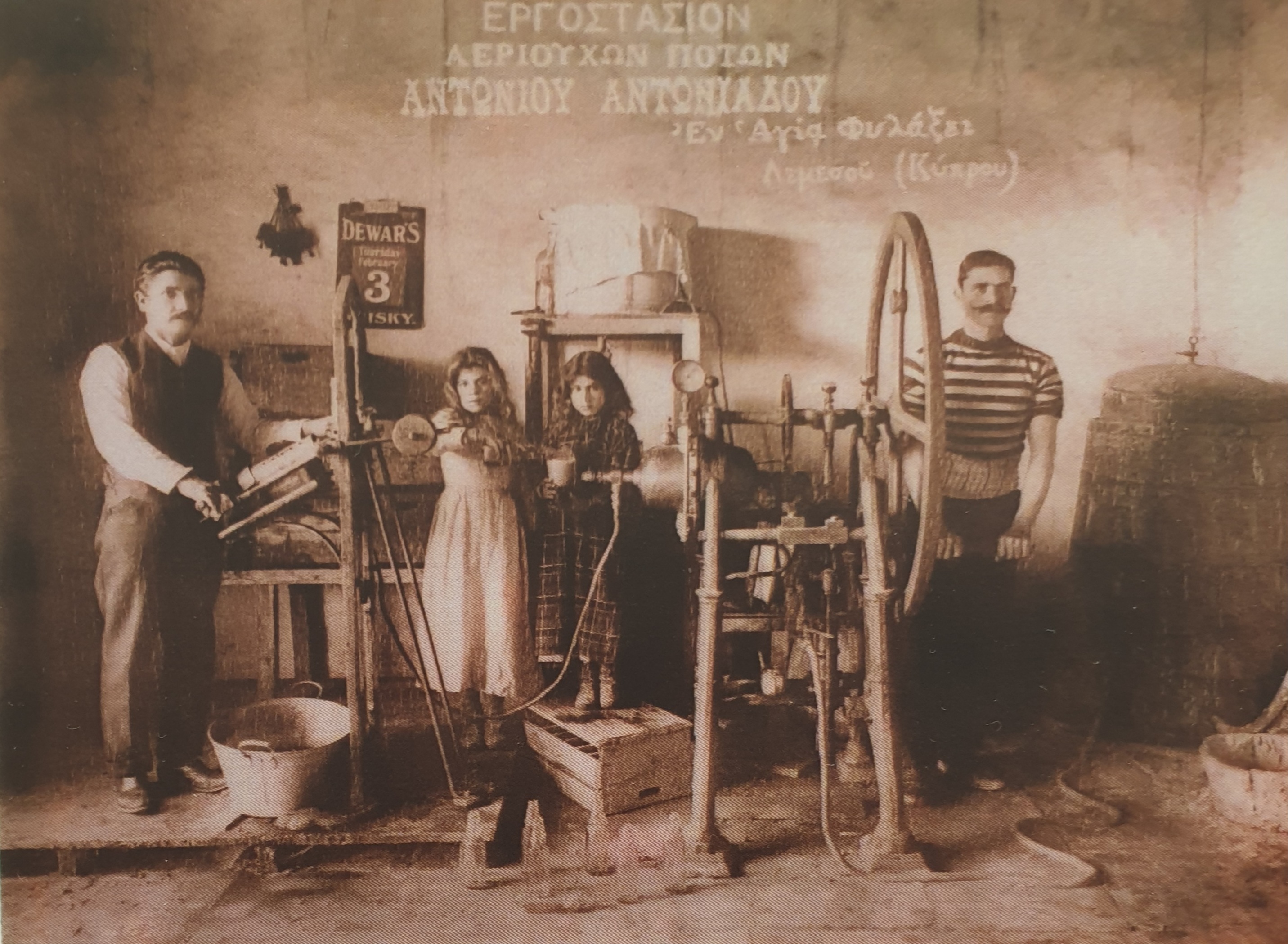

Back in August, the Cyprus Mail reported on the rising popularity of Tesla cars on the island, with their autonomous driving capabilities cited as one of the reasons people are drawn to them.

Tesla CEO Elon Musk has made some big claims about his vehicles’ ability to drive themselves. This is also reflected in public perception of the company, with the vast majority of people believing that Teslas are uniquely equipped for autonomous driving.

In 2016, Tesla announced the first fatality recorded while their Autopilot feature was engaged on any of their vehicles, a Model S. The accident involved a semi-truck making a turn ahead of the Model S and the car colliding with the trailer.

Tesla was defensive of the Autopilot feature at the time, citing fatality statistics to show that fatalities involving traditional driving were a much more frequent occurrence.

“This is the first known fatality in just over 130 million miles where Autopilot was activated. Among all vehicles in the US, there is a fatality every 94 million miles. Worldwide, there is a fatality approximately every 60 million miles,” a Tesla blog post read.
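To make the three figures in the blog post directly comparable, they can be rescaled to a common unit. The short Python sketch below is purely illustrative: it uses only the numbers quoted above, and the function and variable names are our own, not Tesla’s. Bear in mind that the Autopilot figure rests on a single fatality.

```python
# Miles driven per fatality, as quoted in the Tesla blog post.
MILES_PER_FATALITY = {
    "Autopilot engaged": 130_000_000,
    "All US vehicles": 94_000_000,
    "Worldwide": 60_000_000,
}


def fatalities_per_100m_miles(miles_per_fatality: int) -> float:
    """Convert 'one fatality every N miles' into fatalities per 100 million miles."""
    return 100_000_000 / miles_per_fatality


# Rescale every quoted figure to the same unit.
rates = {
    label: fatalities_per_100m_miles(miles)
    for label, miles in MILES_PER_FATALITY.items()
}

for label, rate in rates.items():
    print(f"{label}: {rate:.2f} fatalities per 100 million miles")
```

On these numbers, the rates come out at roughly 0.77, 1.06 and 1.67 fatalities per 100 million miles respectively.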

The post went on to explain the reason for the collision, saying that neither the driver nor Autopilot was able to detect the trailer in front of the car.

“Neither Autopilot nor the driver noticed the white side of the tractor trailer against a brightly lit sky, so the brake was not applied. The high ride height of the trailer combined with its positioning across the road and the extremely rare circumstances of the impact caused the Model S to pass under the trailer, with the bottom of the trailer impacting the windshield of the Model S,” the post explained.

Although Tesla has made improvements to its Autopilot feature both before and after the 2016 fatality, more fatal crashes have occurred. Most notably, a 2019 fatality in Florida was practically identical to the one three years prior. A Model 3 sedan struck the side of a tractor-trailer that was crossing the highway and passed underneath it.

It is somewhat unfair to single out Tesla for its Autopilot feature, but it’s all a matter of framing. Autopilot is a tremendous safety feature, utilising a number of sensors and the car’s onboard computer to construct a complex and detailed picture of what’s happening around the vehicle. However, Musk’s insistence on pushing the ‘fully autonomous’ angle invites further scrutiny, particularly when there are elements of mixed messaging in his proclamations.

In April 2019, the Tesla CEO gave a presentation at the company’s first ‘Autonomy Day’ event. In it, Musk attacked LIDAR technology while praising the method Tesla uses to implement self-driving in its vehicles, and predicted that there would be one million Tesla vehicles running as ‘robotaxis’ (think driverless Uber vehicles) in 2020.

Has Musk’s claim about Teslas being used as robotaxis come to fruition? In short, no. When Musk provided an update in April 2020 on the mooted rollout of robotaxis, he was positive but vague.

“Functionality is still looking good for this year. Regulatory approval is the big unknown,” he said.

In all honesty, this does not tell us a great deal. In fact, even in the aforementioned 2019 presentation, Musk toned down his claims, saying that “we just threw some numbers on there” when questioned further on the robotaxi plan during the question and answer section. Moreover, when asked by an investor who would be liable if someone were injured while riding in a robotaxi, Musk said that the liability would probably fall on Tesla.

As for the technology itself and Musk’s attack on LIDAR, there is still some debate. Briefly, LIDAR, which is currently used in autonomous vehicles from companies such as Uber, Waymo and Toyota, works on a similar principle to radar. It emits laser pulses and measures how long they take to return, effectively mapping the environment around the vehicle. Although the technology is very accurate, it is still being refined and is expensive to implement, at roughly $10,000 per vehicle.
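The timing principle described above can be illustrated with a few lines of Python. This is a minimal sketch of the general time-of-flight calculation, not the code of any particular LIDAR unit; the function name and the 200-nanosecond example value are our own assumptions.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # metres per second, exact by definition


def distance_from_round_trip(round_trip_seconds: float) -> float:
    """Estimate the distance to an object from a laser pulse's round-trip time."""
    # The pulse travels to the object and back, so halve the total path.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2


# Hypothetical example: a pulse that returns after 200 nanoseconds.
example_distance_m = distance_from_round_trip(200e-9)
print(f"{example_distance_m:.1f} m")
```

A pulse that returns after 200 nanoseconds corresponds to an object about 30 metres away. Repeating the measurement across many directions is what builds up the map of the surroundings.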

Tesla, on the other hand, uses cameras, radar, other sensors and machine learning to implement its Autopilot feature. Again, in very simple terms, Tesla’s method is akin to simulating human vision and the brain’s ability to analyse the visual signals it receives. Machine learning means that, at least in theory, the Autopilot feature will get better with time as it records and analyses more visual information.

So was Musk right about Tesla’s technology being superior? We’ll jot this down as a tentative yes, but there are caveats. Tesla’s autonomous driving system has three major benefits. Firstly, it’s adaptive and updatable, allowing for steady and continuous improvement at theoretically faster intervals. For example, in August 2020, Musk promised a ‘quantum leap’ upgrade to Tesla’s self-driving system, saying that “a fundamental architectural rewrite, not an incremental tweak” was coming in a limited public release over the following months.

Secondly, it can differentiate between objects better than base applications of LIDAR, meaning that it’s not just about detecting an object in front of the car, but also being able to understand what kind of object it is. For example, a car should not perform an emergency stop if a plastic bag is in the way.

And thirdly, Tesla’s application has already been adopted by the public, with customers inadvertently helping Tesla perform millions of miles of real-world testing. This sort of data gathering and real-world condition testing is invaluable.

However, the answer is less straightforward. If we revisit the Tesla fatalities, the limitations of the system come back under the microscope. Analysts from Laser Market Research have explained that “the fact remains, the crashes occurred because the Tesla [vehicle] likely didn’t correctly detect its environment”.

It would also be premature to dub either technology the one true way forward. It would not be a major surprise if at least one company combined the two technologies for a more well-rounded self-driving application, although cost is still a major obstacle to this.

More recently, autonomous driving competitor Waymo stated that it would clarify its messaging around self-driving, saying that it would no longer use the phrase so that it doesn’t confuse the public.

“We’re hopeful that consistency will help differentiate the fully autonomous technology Waymo is developing from driver-assist technologies (sometimes erroneously referred to as ‘self-driving’ technologies) that require oversight from licensed human drivers for safe operation,” the company said, in what was clearly a message to Tesla and Musk’s bold claims around the technology.

Waymo CEO John Krafcik spoke to Bloomberg only a few weeks after Waymo’s press release and was even more blunt about Tesla. “It is a misconception that you can simply develop a driver-assistance system further until one day you can magically jump to a fully autonomous driving system,” said Krafcik.

Under the definitions for self-driving vehicles set by the SAE (formerly the Society of Automotive Engineers), Tesla vehicles are currently listed as qualifying for Level 2 automation. Despite having Level 4 characteristics, such as the ability to drive autonomously “but limited to specific locations and/or conditions”, they drop down to Level 2 because “the driver must still maintain full vigilance”.
